Differential Ontology

Differential ontology approaches the nature of identity by explicitly formulating a concept of difference as foundational and constitutive, rather than thinking of difference as merely an observable relation between entities, the identities of which are already established or known. Intuitively, we speak of difference in empirical terms, as though it is a contrast between two things; a way in which a thing, A, is not like another thing, B. To speak of difference in this colloquial way, however, requires that A and B each has its own self-contained nature, articulated (or at least articulable) on its own, apart from any other thing. The essentialist tradition, in contrast to the tradition of differential ontology, attempts to locate the identity of any given thing in some essential properties or self-contained identities, and it occupies, in one form or another, nearly all of the history of philosophy. Differential ontology, however, understands the identity of any given thing as constituted on the basis of the ever-changing nexus of relations in which it is found, and thus, identity is a secondary determination, while difference, or the constitutive relations that make up identities, is primary. Therefore, if philosophy wishes to adhere to its traditional, pre-Aristotelian project of arriving at the most basic, fundamental understanding of things, perhaps its target will need to be concepts not rooted in identity, but in difference.

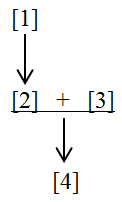

“Differential ontology” is a term that may be applied particularly to the works and ideas of Jacques Derrida and Gilles Deleuze. Their successors have extended their work into cinema studies, ethics, theology, technology, politics, the arts, and animal ethics, among others.

This article consists of three main sections. The first explores a brief history of the problem. The historical emergence of the problem in ancient Greek philosophy not only reveals the dangers of a philosophy of difference, but also demonstrates that it is a problem central to the nature of philosophy as such, and as old as philosophy itself. The second section explores some of the common themes and concerns of differential ontology. The third section discusses differential ontology through the specific lenses of Jacques Derrida and Gilles Deleuze.

Table of Contents

- The Origins of the Philosophy of Difference in Ancient Greek Philosophy
  - Heraclitus
  - Parmenides
  - Plato
  - Aristotle
- Key Themes of Differential Ontology
  - Immanence
  - Time as Differential
  - Critique of Essentialist Metaphysics
- Key Differential Ontologists
  - Jacques Derrida as a Differential Ontologist
  - Gilles Deleuze as a Differential Ontologist
- Conclusion
- References and Further Reading
  - Primary Sources
  - Secondary Sources
  - Other Sources Cited in this Article

1. The Origins of the Philosophy of Difference in Ancient Greek Philosophy

Although the concept of differential ontology is applied specifically to Derrida and Deleuze, the problem of difference is as old as philosophy itself. Its precursors lie in the philosophies of Heraclitus and Parmenides, it is made explicit in Plato and deliberately shut down in Aristotle, remaining so for some two and a half millennia before being raised again, and turned into an explicit object of thought, by Derrida and Deleuze in the middle of the twentieth century.

a. Heraclitus

From its earliest beginnings, what distinguishes ancient Greek philosophy from a mythologically oriented worldview is philosophy’s attempt to offer a rationally unified picture of the operations of the universe, rather than a cosmos subject to the fleeting and conflicting whims of various deities, differing from humans only in virtue of their power and immortality. The early Milesian philosophers, for instance, had each sought to locate among the various primitive elements a first principle (or archê). Thales had argued that water was the primary principle of all things, while Anaximenes had argued for air. Through various processes and permutations (or in the case of Anaximenes, rarefaction and condensation), this first principle assumes the forms of the various other elements with which we are familiar, and of which the cosmos is composed. All things come from this primary principle, and eventually, they return to it.

Against such thinkers, Heraclitus of Ephesus (fl. c. 500 BCE) argues that fire is the first principle of the cosmos: “The cosmos, the same for all, no god or man made, but it always was, is, and will be, an everlasting fire, being kindled in measures and put out in measures.” (DK22B30) From this passage, we are able to glean a few things. The most obvious divergence is that Heraclitus names fire as the basic element, rather than water, air, or, in the case of Anaximander, the boundless (apeiron). But secondly, unlike the Milesians, Heraclitus does not posit any would-be origin of the cosmos. The universe always was and always will be this self-manifesting, self-quenching, primordial fire, expressed in nature’s limitless ways. So while fire, for Heraclitus, may be ontologically basic in some sense, it is not temporally basic or primordial: it did not, in the temporal or sequential order of things, come first.

However, like his Milesian predecessors, Heraclitus appears to provide at least a basic account for how fire as first principle transforms: “The turnings of fire: first sea, and of sea, half is earth, half lightning flash.” (DK22B31a) Elsewhere we can see more clearly that fire has ontological priority only in a very limited sense for Heraclitus: “The death of earth is to become water, and the death of water is to become air, and the death of air is to become fire, and reversely.” (DK22B76) Earth becomes water; water becomes air; air becomes fire, and reversely. Combined with the two passages above, we can see that the ontological priority of fire is its transformative power. Fire from the sky consumes water, which later falls from the sky nourishing the earth. Likewise, fire underlies water (which in its greatest accumulations rages and howls as violently as flame itself), out of which comes earth and the meteorological or ethereal activity itself. Thus we can see the greatest point of divergence between Heraclitus and his Milesian forebears: the first principle of Heraclitus is not a substance. Fire, though one of the classical elements, is of its very nature a dynamic element, a vital element that is nothing more than its own transformation. It creates (volcanoes produce land masses; furnaces temper steel; heat cooks our food and keeps us safe from elemental exposure), but it also destroys in countless obvious ways; it hardens and strengthens, just as it weakens and consumes. Fire, then, is not an element in the sense of a thing, but more a process. In contemporary scientific terms, we would say that it is the result of a chemical reaction; its essence, for Heraclitus, lies in its obvious dynamism. When we look at things (like tables, trees, homes, people, and so forth), they seem to exemplify a permanence which is saliently missing from our experience of fire.

This brings us to the next point: things, for Heraclitus, only appear to have permanence; or rather, their permanence is a result of the processes that make up the identities of the things in question. Here it is appropriate to cite the famous river example, found in more than one Heraclitean fragment: “You cannot step twice into the same rivers; for fresh waters are flowing in upon you.” (DK22B12) “We step and do not step into the same rivers; we are and are not.” (DK22B49a) These passages highlight the seemingly paradoxical nature of the cosmos. On the one hand, of course there is a meaningful sense in which one may step twice into the same river; one may wade in, wade back out, then walk back in again; this body of water is marked between the same banks, the same land markers, and the same flow of water, and so forth. But therein lies the paradox: the water that one waded into the first time is now completely gone, having been replaced by an entirely new configuration of particles of water. So there is also a meaningful sense in which one cannot step into the same river twice. But it is this particular flowing that makes this river this river, and nothing else. Its identity, therefore (as also my own identity), is an effervescent impermanence, constituted on the basis of the flows that make it up. We peer into nature and see things—rivers, people, animals, and so forth—but these are only temporary constitutions, even deceptions: “Men are deceived in their knowledge of things that are manifest.” (DK22B56) The true nature of nature itself, however, continuously eludes us: “Nature tends to conceal itself.” (DK22B123)

The paradoxical nature of things (that their identities are constituted on the basis of processes) helps us to make sense of Heraclitus’ proclamation of the unity of opposites (which both Plato and Aristotle held to be unacceptable). Fire is vital and powerful, raging beneath the appearances of nature like a primordial ontological state of warfare: “War is the father of all and the king of all.” (DK22B53) The very same process-driven nature that makes a thing what it is also tends, by the same operations, toward the thing’s undoing. As we saw, fire is responsible for the opposite and complementary functions of both creativity and destruction. The nature of things is to tend toward their own undoing and eventual passage into their opposites: “What opposes unites, and the finest attunement stems from things bearing in opposite directions, and all things come about by strife.” (DK22B8)

It is in this sense that all things are, for Heraclitus, one. The creative-destructive operations of nature underlie all of its various expressions, binding the whole of the cosmos together in accordance with a rational principle of organization, recognized only in the universal and timeless truth that everything is constantly subject to the law of flux and impermanence: “It is wise to hearken, not to me, but to the Word [logos—otherwise translatable as reason, argument, rational principle, and so forth], and to confess that all things are one.” (DK22B50). Thus it is that Heraclitus is known as the great thinker of becoming or flux. The being of the cosmos, the most essential fact of its nature, lies in its becoming; its only permanence is its impermanence. For our purposes, we can say that Heraclitus was the first philosopher of difference. Where his predecessors had sought to identify the one primordial, self-identical substance or element, out of which all others had emerged, Heraclitus had attempted to think the world, nothing more than the world, in a permanent state (if this is not too paradoxical) of flux.

b. Parmenides

Parmenides (b. 510 BCE) was likely a young man at the time when Heraclitus was philosophically active. Born in Elea, in Lower Italy, Parmenides’ name is the one most commonly associated with Eleatic monism. While (ironically) there is no one standard interpretation of Eleatic monism, probably the most common understanding of Parmenides is filtered through our familiarity with the paradoxes of his successor, Zeno, who argued, in defense of Parmenides, that what we humans perceive as motion and change are mere illusions.

Against this backdrop, what we know of Parmenides’ views comes to us from his didactic poem, titled On Nature, which now exists in only fragmentary form. Here, however, we find Parmenides almost explicitly objecting to Heraclitus: “It is necessary to say and to think that being is; for it is to be/but it is by no means nothing. These things I bid you ponder./For from this first path of inquiry, I bar you./then yet again from that along which mortals who know nothing/wander two-headed: for haplessness in their/breasts directs wandering understanding. They are borne along/deaf and blind at once, bedazzled, undiscriminating hordes,/who have supposed that being and not-being are the same/and not the same; but the path of all is back-turning.” (DK28B6) There are a couple of important things to note here. First, the mention of those who suppose that being and not-being are the same and not the same hearkens almost explicitly to Heraclitus’ notion of the unity of opposites. Secondly, Parmenides declares this to be the opinion of the undiscriminating hordes, the masses of non-philosophically-minded mortals.

Therefore, Heraclitus, on Parmenides’ view, does not provide a philosophical account of being; rather, he simply coats in philosophical language the everyday experience of the mob. Against Heraclitus’ critical diagnosis that humans (deceptively) see only permanent things, Parmenides claims that permanence is precisely what most people, Heraclitus included, miss, preoccupied as we are with the mundane comings and goings of the latest trends, fashions, and political currents. The being of the cosmos lies not in its becoming, as Heraclitus thought. Becoming is nothing more than an illusion, the perceptions of mortal minds. What is, for Parmenides, is and cannot not be, while what is not, is not, and cannot be. Neither is it possible to even think of what is not, for to think of anything entails that it must be an object of thought. Thus to meditate on something that is not an object is, for Parmenides, contradictory. Therefore, “for the same thing is for thinking and for being.” (DK28B3)

Being is indivisible; for in order to divide being from itself, one would have to separate being from being by way of something else, either being or not-being. But not-being is not and cannot be (so not-being cannot separate being from being). And if being is separated from being by way of being, then being itself in this thought-experiment is continuous, that is to say, being is undivided (and indivisible): “for you will not sever being from holding to being.” (DK28B4.2) Being is eternal and unchanging; for if being were to change or become in any way, this would entail that in some sense it had participated or would participate in not-being, which is impossible: “How could being perish? How could it come to be?/For if it came to be, it was not, nor if it is ever about to come to be./In this way becoming has been extinguished and destruction is not heard of.” (DK28B8.19-21)

Being, for Parmenides, is thus eternal, unchanging, and indivisible spatially or temporally. Heraclitus might have been right to note the way things appear, (as a constant state of becoming) but he was wrong, on Parmenides’ view, to confuse the way things appear with the way things actually are, or with the “steadfast heart of persuasive truth.” (DK28B1.29) Likewise, Parmenides has argued, thought can only genuinely attend to being—what is eternal, unchanging, and indivisible. Whatever it is that Heraclitus has found in the world of impermanence, it is not, Parmenides holds, philosophy. While unenlightened mortals may attend to the transience of everyday life, the path of genuine wisdom lies in the eternal and unchanging. Thus, while Heraclitus had been the first philosopher of difference, Parmenides is the first to assert explicitly that self-identity, and not difference, is the basis of philosophical thought.

c. Plato

It is these two accounts, the Heraclitean and the Parmenidean (with an emphatic privileging of the latter), that Plato attempts to answer and fuse in his theory of forms. Throughout Plato’s Dialogues, he consistently gives credence to the Heraclitean observation that things in the material world are in a constant state of flux. The Parmenidean inspiration in Plato’s philosophy, however, lies in the fact that, like Parmenides, Plato will explicitly assert that genuine knowledge must concern itself only with that which is eternal and unchanging. So, given the transient nature of material things, Plato will hold that knowledge, strictly speaking, does not apply to material things specifically, but rather, to the Forms (eternal and unchanging) of which those material things are instantiations. In Book V of the Republic, Plato (through Socrates) argues that each human capacity has a matter that is proper exclusively to it. For example, the capacity of seeing has as its proper matter waves of light, while the capacity of hearing has as its proper matter waves of sound. In a proclamation that hearkens almost explicitly to Parmenides, knowledge, Socrates argues, concerns itself with being, while ignorance is most properly affiliated with not-being.

From here, the account continues, (with an eye toward Heraclitus). Everything that exists in the world participates both in being and in not-being. For example, every circle both is and is not “circle.” It is a circle to the extent that we recognize its resemblance, but it is not circle (or the absolute embodiment of what it means to be a circle) because we also recognize that no circle as manifested in the world is a perfect circle. Even the most circular circle in the world will possess minor imperfections, however slight, that will make it not a perfect circle. Thus the things in the world participate both in being and in not-being. (This is the nature of becoming). Since being and not-being each has a specific capacity proper to it, becoming, lying between these two, must have a capacity that is proper only to it. This capacity, he argues, is opinion, which, as is fitting, is that epistemological mode or comportment lying somewhere between knowledge and ignorance.

Therefore, when one’s attention is turned only to the things of the world, one can possess only opinions regarding them. Knowledge, reserved only for that domain of being, that which is and cannot not be, for Plato, applies only to the Form of the thing, or what it means to be x and nothing but x. This Form is an eternal and unchanging reality. If one has knowledge of the Form, then one can evaluate each particular in the world, in order to accurately determine whether or not it in fact accords with the principle in question. If not, one may only have opinions about the thing. For example, if one possesses knowledge of the Form of the beautiful, then one may evaluate particular things in the world (paintings, sculptures, bodies, and so forth) and know whether or not they are in fact beautiful. Lacking this knowledge, however, one may hold opinions as to whether or not a given thing is beautiful, but one will never have genuine knowledge of it. More likely, one will merely possess certain tastes on the matter (I like this poem; I do not like that painting; and so forth). This is why Socrates, especially in the earlier dialogues, is adamant that his interlocutors not give him examples in order to define or explain their concepts (a pious action is doing what I am doing now…). Examples, he argues, can never tell me what the Form of the thing is (such as piety, in the Euthyphro). The philosopher, Plato holds, concerns himself with being, or the essentiality of the Form, as opposed to the lover of opinion, who concerns himself only with the fleeting and impermanent.

From this point, however, things in the Platonic account start to get more complicated. “Participation” is itself a somewhat messy notion that Plato never quite explains in any satisfactory way. After all, what does it mean to say, as Socrates argues in the Republic, that the ring finger participates in the form of the large when compared to the pinky, and in the form of the small when compared to the middle finger? It would seem to imply that a thing’s participation in its relevant Form derives, not from anything specific about its nature, but only insofar as its nature is related to the nature of another thing. But the story gets even more complicated in that at multiple points in his later dialogues, Plato argues explicitly for a Form of the different, which complicates what we typically call Platonism, almost beyond the point of recognition, (see, for example, Theaetetus 186a; Parmenides 143b, 146b, and 153a; and Sophist 254e, 257b, and 259a).

On its face, this should not be surprising. If a finger sometimes participates in the form of the large and sometimes in the form of the small, it should stand to reason that any given thing, when looked at side by side with something similar, will be said to participate in the form of the same, while by extension, when compared to something that differs in nature, will be said to participate in the form of the different—and participate more greatly in the form of the different, the more different the two things are. A baseball, side by side with a softball, will participate greatly in the form of the same, (but somewhat in the Form of the different), but when looked at side by side with a cardboard box, will participate more in the Form of the different.

But consistently articulating what a Form of the different would be is more complicated than it may at first seem. To say that the Form of x is what it means to be x and nothing but x is comprehensible enough, when one is dealing with an isolable characteristic or set of characteristics of a thing. If we say, for instance, that the Form of circle is what it means to be a circle and nothing but a circle, we know that we mean all of the essential characteristics that make a circle a circle (a round plane figure the boundary of which consists of points equidistant from a center; an arc of 360°; and so forth). By implication, each individual Form, to the extent that it completely is what it is, also participates equally in the Form of the same, in that it is the same as itself, or it is self-identical. But what can it possibly mean to say that the Form of the different is what it means to be different and nothing but different? It would seem to imply that the identity of the Form of the different is that it differs, but this requires that it differs even from itself. For if the essence of the different is that it is the same as the different (in the way that the essence of circle is self-identical to what it means to be circle), then this would entail that the essence of the Form of the different is that, to the same extent that it is different, it participates equally in the Form of the same (or that, like the rest of the Forms, it is self-identical). But the Form of the different is defined by its being absolutely different from the Form of the same; it must bear nothing of the Form of the same. But this means that the Form of the different must be different from the different as well; put otherwise, while for every other conceivable Platonic Form, one can say that it is self-identical, the Form of the different would be absolutely unique in that its nature would be defined by its self-differentiation.

But there are further complications still. Each Form in Plato’s ontology must relate to every other Form by way of the Form of the different. From this it follows that, just as the Form of the same pervades all the other Forms, (in that each is identical to itself), the Form of the different also pervades all the other Forms, (in that each Form is different from every other). This implies, in some sense, that the different is co-constitutive, along with Plato’s Form of the same, of each other individual Form. After all, part of what makes a thing what it is, (and hence, self-same, or self-identical), is that it differs from everything that it is not. To the extent that the Form of the same makes any given Form what it is, it is commensurably different from every other Form that it is not.

This complication, however, reaches its apogee when we consider the Form of the same specifically. As we said, the Form of the different is defined by its being absolutely different from the Form of the same. The Form of the same differs from all other Forms as well. While, for instance, the Form of the beautiful participates in the Form of the same, (in that the beautiful is the same as the beautiful, or it is self-identical), nevertheless, the Form of the same is different from, (that is, it is not the same as) the Form of the beautiful. The Form of the same differs, similarly, from all other Forms. However, its difference from the Form of the different is a special relation. If the Form of the different is defined by its being absolutely different from the Form of the same, we can say reciprocally, that the Form of the same is defined by its being absolutely different from the Form of the different; it relates to the different through the different. But this means that, to the extent that the Form of the same is self-same, or self-identical, it is so because it differs absolutely from the Form of the different. This entails, however, that its self-sameness derives from its maximal participation in the Form of the different itself.

We see, then, the danger posed by Plato’s Form of the different, and hence, by any attempt to formulate a concept of difference itself. Plato’s Form of the same is ubiquitous throughout his ontology; it is, in a certain sense, the glue that holds together the rest of the Forms, even if in many of his Middle Period dialogues, it never makes an explicit appearance. Simply by understanding what a Form means for Plato, we can see the central role that the Form of the same plays for this, or for that matter, any other essentialist ontology. By simply introducing a Form of the different, and attempting to rigorously think through its implications, one can see that it threatens to fundamentally undermine the Form of the same itself, and hence by implication, difference threatens to devour the whole rest of the ontological edifice of essentialism. Plato, it seems, was playing with Heraclitean fire. It is likely largely for this reason that Aristotle, Plato’s greatest student, nixes the Form of the different in his Metaphysics.

d. Aristotle

In the Metaphysics, Aristotle attempts to correct what he perceives to be many of Plato’s missteps. For our purposes, what is most important is his treatment of the notion of difference. For Aristotle inserts into the discussion a presupposition that Plato had not employed, namely, that ‘difference’ may be said only of things which are, in some higher sense, identical. Where Plato’s Form of the different may be said to relate everything to everything else, Aristotle argues that there is a conceptual distinction to be made between difference and otherness.

For Aristotle, there are various ways in which a thing may be said to be one, in terms of: (1) Continuity; (2) Wholeness or unity; (3) Number; (4) Kind. The first two are a bit tricky to distinguish, even for Aristotle. By continuity, he means the general sense in which a thing may be said to be a thing. A bundle of sticks, bound together with twine, may be said to be one, even if it is a result of human effort that has made it so. Likewise, an individual body part, such as an arm or leg, may be said to be one, as it has an isolable functional continuity. Within this grouping, there are greater and lesser degrees to which something may be said to be one. For instance, while a human leg may be said to be one, the tibia or the femur, on their own, are more continuous, (in that each is numerically one, and the two of them together form the leg).

With respect to wholeness or unity, Aristotle clarifies the meaning of this as not differing in terms of substratum. Each of the parts of a man, (the legs, the arms, the torso, the head), may be said to be, in their own way, continuous, but taken together, and in harmonious functioning, they constitute the oneness or the wholeness of the individual man and his biological and psychological life. In this sense, the man is one, in that all of his parts function naturally together towards common ends. In the same respect, a shoelace, each eyelet, the sole, and the material comprising the shoe itself, may be said to be, each in their own way, continuous, while taken together, they constitute the wholeness of the shoe.

Oneness in number is fairly straightforward. A man is one in the organic sense above, but he is also one numerically, in that his living body constitutes one man, as opposed to many men. Finally, there is generic oneness, the oneness in kind or in intelligibility. There is a sense in which all human beings, taken together, may be said to be one, in that they are all particular tokens of the genus human. Likewise, humans, cats, dogs, lions, horses, pigs, and so forth, may all be said to be one, in that they are all types of the genus animal.

Otherness is the term that Aristotle uses to characterize existent things which are, in any sense of the term, not one. There is, as we said, a sense in which a horse and a woman are one, (in that both are types of the genus animal), but an obvious sense in which they are other as well. There is a sense in which my neighbor and I are one, (in that we are both tokens of the genus human), but insofar as we are materially, emotionally, and psychologically distinct, there is a sense in which I am other than my neighbor as well. There is an obvious sense in which I and my leg are one but there is also a sense in which my leg is other than me as well, (for if I were to lose my leg in an accident, provided I received prompt and proper medical attention, I would continue to exist). Every existent thing, Aristotle argues, is by its very nature either one with or other than every other existent thing.

But this otherness does not, (as it does for Plato’s Form of the different), satisfy the conditions for what Aristotle understands as difference. Since everything that exists is either one with or other than everything else that exists, there need not be any definite sense in which two things are other. Indeed, there may be, (as we saw above, my neighbor and I are one in the sense of tokens of the genus human, but are other numerically), but there need not be. For instance, you are so drastically other than a given place, say, a cornfield, that we need not even enumerate the various ways in which the two of you are other.

This, however, is the key for Aristotle: otherness is not the same as difference. While you are other than a particular cornfield, you are not different from a cornfield. Difference, strictly speaking, applies only when there is some definite sense in which two things may be said to differ, which requires a higher category of identity within which a distinction may be drawn: “For the other and that which it is other than need not be other in some definite respect (for everything that is existent is either other or same), but that which is different is different from some particular thing in some particular respect, so that there must be something identical whereby they differ. And this identical thing is genus or species…” (Metaphysics, X.3) In other words, two human beings may be different (that is, one may be taller, lighter-skinned, a different gender, and so forth), but this is because they are identical in the sense that both are specific members of the genus ‘human.’ A human being may be different from a cat (that is, one is quadrupedal while the other is bipedal, one is non-rational while the other is rational, and so forth), but this is because they are identical in the sense that both are specified members of the genus ‘animal.’

But between these two, generic and specific, specific difference or contrariety is, according to Aristotle, the greatest, most perfect, or most complete. This assessment too is rooted in Aristotle’s emphasis on identity as the basis of differentiation. Differing in genus in Aristotelian terminology means primarily belonging to different categories of being (substance, time, quality, quantity, place, relation, and so forth). You are other than ‘5,’ to be sure, but Aristotle would not say that you are different from ‘5,’ because you are a substance and ‘5’ is a quantity. Given that these two are distinct categories of being, for Aristotle there is not really a meaningful sense in which they can be said to relate, and hence, there is not a meaningful sense in which they can be said to differ. Things that differ in genus are so far distant (closer, really, to otherness), as to be nearly incomparable. However, a man may be said to be different from a cat, because the characteristics whereby they are distinguished from each other are contrarieties, occupying opposing sides of a given either/or, for instance, rational v. non-rational. Specific difference or contrariety thus provides us with an affirmation or a privation, a ‘yes’ or a ‘no’ that constitutes the greatest difference, according to Aristotle. Differences in genus are too great, while differences within species are too minute and numerous (skin color, for instance, is manifested in a myriad of ways), but specific contrariety is complete, embodying an affirmation or negation of a particular given quality whereby genera are differentiated into species.

There are thus two senses in which, for Aristotle, difference is thought only in accordance with a principle of identity. First, there is the identity that two different things share within a common genus. (A rock and a tree are identical in that both are members of the genus, ‘substance,’ differentiated by the contrariety of ‘living/non-living.’) Second, there is the identity of the characteristic whereby two things are differentiated: material (v. non-material), living (v. non-living), sentient (v. non-sentient), rational (v. irrational), and so forth.

We see, then, that with Aristotle, difference becomes fully codified within the tradition as the type of empirical difference that we mentioned at the outset of this article: it is understood as a recognizable relation between two things which, prior to (and independently of) their relating, possess their own self-contained identities. This difference then is a way in which a thing A, (which is identical to itself) is not like a thing B (which is identical to itself), while both belong to a higher category of identity, (in the sense of an Aristotelian genus). Given the difficulties that we encountered with Plato’s attempt to think a Form of the different, it is perhaps little wonder that Aristotle’s understanding of difference was left unchallenged for nearly two and a half millennia.

2. Key Themes of Differential Ontology

As noted in the Introduction, differential ontology is a term that can be applied to two specific thinkers (Deleuze and Derrida) of the late twentieth century, along with those philosophers who have followed in the wakes of these two. It is, nonetheless, not applicable as a school of thought, in that there is not an identifiable doctrine or set of doctrines that define what they think. Indeed, for as many similarities as one can find between them, there are likely equally many distinctions as well. They work out of different philosophical traditions: Derrida primarily from the Hegel-Husserl-Heidegger triumvirate, with Deleuze, (speaking critically of the phenomenological tradition for the most part) focusing on the trinity of Spinoza-Nietzsche-Bergson. Theologically, they are interested in different sources, with Derrida giving constant nods to thinkers in the tradition of negative theology, such as Meister Eckhart, while Deleuze is interested in the tradition of univocity, specifically in John Duns Scotus. They have different attitudes toward the history of metaphysics, with Derrida working out of the Heideggerian platform of the supposed end of metaphysics, while Deleuze explicitly rejects all talk of any supposed end of metaphysics. They hold different attitudes toward their own philosophical projects, with Derrida coining the term, (following Heidegger’s Destruktion), deconstruction, while for Deleuze, philosophy is always a constructivism. In many ways, it is difficult to find two thinkers who are less alike than Deleuze and Derrida.

Nevertheless, what makes them both differential ontologists is that they work within a framework of specific thematic critiques and assumptions, and that on this basis both come to argue that difference in itself has never been recognized as a legitimate object of philosophical thought, to hold that identities are always constituted on the basis of difference in itself, and to attempt explicitly to formulate such a concept. Let us now look to these thematic, structural elements.

a. Immanence

The word immanence is contrasted with the word transcendence. “Transcendence” means going beyond, while “immanence” means remaining within, and these designations refer to the realm of experience. In most monotheistic religious traditions, for instance, which emphasize creation ex nihilo, God is said to be transcendent in the sense that He exists apart from His creation. God is the source of nature, but God is not, Himself, natural, nor is He found within anything in nature except perhaps in the way in which one might see reflections of an artist in her work of art. Likewise, God does not, strictly speaking, resemble any created thing. God is eternal, while created beings are temporal; God is without beginning, while created things have a beginning; God is a necessary being, while created things are contingent beings; God is pure spirit, while created things are material. The creature closest in nature to God is the human being who, according to the Biblical book of Genesis, is created in the image of God, but even in this case, God is not to be understood as resembling human beings: “For my thoughts are not your thoughts, neither are my ways your ways…” (Isaiah 55:8).

In this sense, we can say that historically, the trend in Eastern philosophies and religions (which are not as radically differentiated as they are in the Western tradition), has always leaned much more in the direction of immanence than of transcendence, and definitely more so than nearly all strains of Western monotheism. In schools of Eastern philosophy that have some notion of the divine (and a great many of them do not), many if not most understand the divine as somehow embodied or manifested within the world of nature. Such a position would be considered idolatrous in most strains of Western monotheism.

With respect to the Western philosophical tradition specifically, we can say that, even in moments when religious tendencies and doctrines do not loom large, (like they do, for instance, during the Medieval period), there nevertheless remains a dominant model of transcendence throughout, though this transcendence is emphasized in greater and lesser degrees across the history of philosophy. There have been outliers, to be sure—Heraclitus comes to mind, along with Spinoza and perhaps David Hume, but they are rare. A philosophy rooted in transcendence will, in some way, attempt to ground or evaluate life and the world on the basis of principles or criteria not found (indeed not discoverable de jure) among the living or in the world. When Parmenides asserts that the object of philosophical thought must be that which is, and which cannot not be, which is eternal, unchanging, and indivisible, he is describing something beyond the realm of experience. When Plato claims that genuine knowledge is found only in the Form; when he argues in the Phaedo that the philosophical life is a preparation for death; that one must live one’s life turning away from the desires of the body, in the purification of the spirit; when he alludes, (through the mouth of Socrates) to life as a disease, he is basing the value of this world on a principle not found in the world. When St. Paul writes that “…the flesh lusts against the Spirit, and the Spirit against the flesh; and these are contrary to one another, so that you do not do the things you wish” (Galatians 5:17), and that “the mind governed by the flesh is death,” (Romans 8:6), he is evaluating this world against another. 
When René Descartes recognizes his activity of thinking and finds therein a soul; when John Locke bases Ideas upon a foundation of primary qualities, immanent, allegedly, to the thing, but transcendent to our experience; when Immanuel Kant bases phenomenal appearances upon noumenal realities, which, outside of space, time, and all causality, ever elude cognition; when an ethical thinker seeks a moral law, or an absolute principle of the good against which human behaviors may be evaluated; in each of these cases, a transcendence of some sort is posited—something not found within the world is sought in order to make sense of or provide a justification for this world.

Famously, Friedrich Nietzsche argued that the history of philosophy was one of the true world finally becoming a fable. Tracing the notion of the true world from its sagely Platonic (more accurately, Parmenidean) inception up through and beyond its Kantian (noumenal) manifestation, he demonstrates that as the demand for certainty (the will to Truth) intensifies, the so-called true world becomes less plausible, slipping further and further away, culminating in the moment he called “the death of God.” The true world has now been abolished, leaving only the apparent world. But the world was only ever called apparent by comparison with a purported true world (think here of Parmenides’ castigation of Heraclitus). Thus, when the true world is abolished, the apparent world is abolished as well; and we are left with only the world, nothing more than the world.

One of the key features of differential ontology will be the adoption of Nietzsche’s proclaimed (and reclaimed) enthusiasm for immanence. Deleuze and Derrida will, each in his own way, argue that philosophy must find its basis within and take as its point of departure the notion of immanence. As we shall see below, in Deleuze’s philosophy, this emphasis on immanence will take the form of his enthusiasm for the Scotist doctrine of the univocity of being. For Derrida, it will be his lifelong commitment to the phenomenological tradition, inspired by the vast body of research conducted over nearly half a century by Edmund Husserl, out of which Derrida’s professional research platform began, (and in which he discovers the notion of différance).

b. Time as Differential

Related to the privileging of immanence is the second principle of central importance to differential ontology, a careful and rigorous analysis of time. Such analysis, inspired by Edmund Husserl, will yield the discovery of a differential structure, which stands in opposition to the traditional understanding of time, the spatially organized, puncti-linear model of time. This refers to a conglomeration of various elements from Plato, Aristotle, St. Augustine, Boethius, and ultimately, the Modern period.

Few thinkers have attempted so rigorously as Aristotle to think the paradoxical nature of time. If we take the very basic model of time as past-present-future, Aristotle notes that one part of this (the past), has been but is no more, while another part of it (the future) will be but is not yet. There is an inherent difficulty, therefore, in trying to understand what time is, because it seems as though it is composed of parts made up of things that do not exist; therefore, we are attempting to understand what something that does not exist, is.

Furthermore, the present or the now itself, for Aristotle, is not a part of time, because a part is so called because of its being a constitutive element of a whole. However, time, Aristotle claims, is not made up of nows, in the same way that a line, though it has points, is not made up of points.

Likewise, the now cannot simply remain the same, but nor can it be said to be discrete from other nows and ever-renewed. For if the now is ever the same, then everything that has ever happened is contained within the present now, (which seems absurd); but if each now is discrete, and is constantly displaced by another discrete, self-contained now, the displacement of the old now would have to take place within (or simultaneously with) the new, incoming now, which would be impossible, as discrete nows cannot be simultaneous; hence time as such would never pass.

Aristotle will therefore claim that there is a sense in which the now is constantly the same, and another sense in which it is constantly changing. The now is, Aristotle argues, both a link of and the limit between future and past. It connects future and past, but is at the same time the end of the past and the beginning of the future; but the future and the past lie on opposite sides of the now, so the now cannot, strictly speaking, belong either to the past or to the future. Rather, it divides the past from the future. The essence of the now is this division; as such, the now itself is indivisible, “the indivisible present ‘now’” (Physics, IV.13). Insofar as each now succeeds another in a linear movement from future to past, the now is ever-changing: what is predicated of the now is constantly filled out in an ever-new way. But structurally speaking, inasmuch as the now is always that which divides and unites future and past, it is constantly the same.

Without the now, there would be no time, Aristotle argues, and vice versa. For what we call time is merely the enumeration that takes place in the progression of history between some moment, future or past, relative to the now moment: “For time is just this—number of motion in respect of ‘before’ and ‘after’.” (Physics, IV.11)

Here, then, are the elements that Aristotle leaves us with: an indivisible now moment that serves as the basis of the measure of time, which is a progression of enumeration taking place between moments, and a notion of relative distance that marks that progression of enumeration.

In Plato, St. Augustine, and Boethius, we find an important distinction between time and eternity: (it is important to note that Aristotle’s discussion of time is found in his Physics, not in his Metaphysics, because time, as the measure of change, belongs only to the things of nature, not to the divine). The reason that this is an important distinction for our purposes is that eternity, (for Plato, Augustine, and Boethius), is the perspective of the divine, while temporality is the perspective of creation. Eternity, for all three of these thinkers, does not mean passing through every moment of time in the sense of having always been there, and always being there throughout every moment of the future, (which is called ‘sempiternity’). All three of these philosophers view time as itself a created thing, and eternity, the perspective of the divine, stands outside of time.

Having created the entire spectrum of time, and standing omnisciently outside of time, the divine sees the whole of time, in an ever-present now. This complete fullness of the now is how Plato, St. Augustine, and Boethius understand eternity. Once we have made this move, however, it is a very short leap to the conclusion that, in a sense, all of time is ever-present; certainly not from our perspective, but from the perspective of the divine. In other words, right now, in an ever-present now, God is seeing the exodus of the Israelites, the sacking of Troy, the execution of Socrates, the crucifixion of Jesus, the fall of the Roman Empire, and the moment (billions of years from now) that the sun will become a Red Giant. Therefore, what we call the now is, on this model, no more or less significant, and no more or less NOW, than any other moment in time. It only appears to be so, from our very limited, finite perspectives. From the perspective of eternity, however, each present is equally present. Plato refers to time in the Timaeus as a “moving image of eternity.” (Timaeus, 37d)

The final piece of the puncti-linear model of time comes from the historical moment of the scientific revolution, with the conceptual birth of absolute time. On the Modern view, time is not the Aristotelian measure of change; rather, the measure of change is how we typically perceive time. Time, in and of itself, however, just is, in the same way that space, on the Modern view, just is—it is mathematical, objectively uniform and unitary, and the same in all places, its units (or moments) unfolding with precise regularity. Though the term “absolute time” was officially introduced by Sir Isaac Newton in his Philosophiae Naturalis Principia Mathematica (1687), as that which “from its own nature flows equably without regard to anything external,” it is nevertheless clear that the experiments and theories of both Johannes Kepler and Galileo Galilei (both of whom historically preceded Newton) assume a model of absolute time. None other than René Descartes, the philosopher who did more than any other to usher in the modern sciences, writes in the third of his famous Meditations on First Philosophy, (where he argues for the existence of God) “for the whole time of my life may be divided into an infinity of parts, each of which is in no way dependent on any other…”

The puncti-linear model of time, then, conceives the whole of time as a series of now-points, or moments, each of which makes up something like an atom of time (as the physical atom is a unit of space). Each of those moments is ontologically and logically independent of every other, with the present moment being the now-point most alive. The past, then, is conceived as those presents that have come and gone, while the future is conceived as those presents that have not yet come, and insofar as we speak of past and future moments as occupying points of greater and lesser distances with respect to the present and to each other as well, we are, whether we realize it or not, conceiving of time as a linear progression; thus when we attempt to understand the essence of time, we tend to conceptually spatialize it. This prejudice is most easily seen in the ease with which our mind leaps to timelines in order to conceptualize relations of historical events.

Edmund Husserl, whose 1904-1905 lectures On the Phenomenology of the Consciousness of Internal Time probably did more to shape the future of the continental tradition in philosophy in the twentieth century than any other single text, was also very influential on the issue of time consciousness. There, Husserl constructs a model of time consciousness that he calls “the living present.” Whether or not there is any such thing as real time, or absolute time is, for Husserl, one of those questions that is bracketed in the performance of the phenomenological epochē; time, for Husserl, as everything else, is to be analyzed in terms of how it is experienced, in other words, in terms of how it is lived by a constituting subject. Husserl’s point of departure is to object to the theory of experience offered by his mentor, Franz Brentano. Assuming (though not making explicit) the puncti-linear model of time, Brentano distinguishes between two basic types of experience. The primary mode is perception, which is the consciousness of the present now-point. All modes of the past are understood in terms of memory, which is the imagined recollection or representation of a now-moment no longer present. If you are asked to call to mind your celebration of your tenth birthday, you are employing the faculty of memory. And every mode of experience dealing with the past (or the no-longer-present), is understood by Brentano as memory.

This understanding presents a problem, though. From the moment you began reading the first word of this sentence, “From,” until this moment right now, as you are reading these words, there is a type of memory being employed. Indeed, in order to genuinely experience any given experience as an experience, and not just a random series of moments, there must be some operation of memory taking place. To cognize a song as a song, rather than a random series of notes, we must have some memory of the notes that have come just before, and so on. However, the type of memory that is being used here seems to be qualitatively different than the sort of memory employed when you are encouraged to reflect upon your tenth birthday, or even, to reflect upon what you had for dinner last night. This type of reflection, (or representation) is a calling-back-to-mind of an event that was experienced at some point in the past, but has long since left consciousness, while the other (the sort of memory it takes to cognize a sentence or a paragraph, for instance), is an operative memory of an event that has not yet left consciousness. Both are modes of memory, to be sure, but they are qualitatively different modes of memory, Husserl argues.

Moreover, in each moment of experience, we are at the same time looking forward to the future in some mode of expectation. This, too, is something we experience on a frequent basis. For instance, when walking through a door that we walk through frequently, we might casually tap the handle and lead with our head and shoulders because we expect that the door, unlocked, will open for us as it always does; when sitting at the bus stop, as the bus approaches the curb, we stand, because we expect that it is our bus, and that it is stopping to let us on. Our expectations are not quite as salient as are our primary memories, but they are there. All it takes is a rupture of some sort—the door may be locked, causing us to hit our head; the bus may not be our bus, or the driver may not see us and may continue driving—to realize that the structure of expectation was present in our consciousness.

Time, Husserl argues, is not experienced as a series of discrete, independent moments that arise and instantly die; rather, experience of the present is always thick with past and future. What Aristotle refers to as the now, Husserl calls the ‘primal impression,’ the moment of impact between consciousness and its intentional object, which is ever-renewed, but also ever-passing away; but the primal impression is constantly surrounded by a halo of retention (or primary memory) and protention (primary expectation). This structure, taken altogether, is what Husserl calls, “the living present.”

Derrida and Deleuze will each employ (while subjecting to strident criticism) Husserl’s concept of the living present. If the present is always constituted as a relation of past to future, then the very nature of time is itself relational, that is to say, it cannot be conceived as points on a line or as seconds on a clock. If time is essentially or structurally relational, then everything we think about ‘things’ (insofar as things are constituted in time), and knowledge (insofar as it takes place in time), will be radically transformed as well. To fully think through the implications of Husserl’s discovery entails a fundamental reorientation toward time and along with it, being. Deleuze employs the retentional-protentional structure of time, while discarding the notion of the primal impression. Derrida will stick with the terms of Husserl’s structure, while demonstrating that the present in Husserl is always infected with or contaminated by the non-present, the structure of which Derrida calls différance.

c. Critique of Essentialist Metaphysics

In a sense it is difficult to talk in a synthetic way about what Deleuze and Derrida find wrong with traditional metaphysics, because they each, in the context of their own specific projects, find distinct problems with the history of metaphysics. However, the following is clear: (1) If we can use the term ‘metaphysics’ to characterize the attempt to understand the fundamental nature of things, and; (2) If we can acknowledge that traditionally the effort to do that has been carried out through the lenses of representational thinking in some form or other, then; (3) the critiques that Deleuze and Derrida offer against the history of metaphysical thinking are centered around the point that traditional metaphysics ultimately gets things wrong.

For Deleuze, this will come down to a necessary and fundamental imprecision that accompanies traditional metaphysics. Inasmuch as the task of metaphysics is to think the nature of the thing, and inasmuch as it sets for itself essentialist parameters for doing so, and so necessarily filters its own understanding of the thing through conceptual representations, philosophy can never arrive at an adequate concept of the individual. To our heart’s desire, we may compound and multiply the concepts that we use to characterize a thing, but there will always necessarily be some aspect of that thing left untouched by thinking. Let us use a simple example, an inflated ball: we can describe it by as many representational concepts as we like. It is a certain color or a certain pattern, made of a certain material (rubber or plastic, perhaps), a certain size, it has a certain degree of elasticity, is filled with a certain amount of air, and so forth. However many categories or concepts we may apply to this ball, the nature of this ball itself will always elude us. Our conceptual characterization will always reach a terminus; our concepts can only go so far down. The ball is these characterizations, but it is also different from its characterizations as well. For Deleuze, if our ontology cannot reach down to the thing itself, if it is structurally and essentially incapable of comprehending the constitution of the thing, (as any substance metaphysics will be), then it is, for the most part, worthless as an ontology.

Derrida casts his critiques of the history of metaphysics in the Heideggerian language of presence. The history of philosophy, Derrida argues, is the metaphysics of presence, and the Western religious and philosophical tradition operates by categorizing being according to conceptual binaries: (good/evil, form/matter, identity/difference, subject/object, voice/writing, soul/body, mind/body, invisible/visible, life/death, God/Satan, heaven/earth, reason/passion, positive/negative, masculine/feminine, true/apparent, light/dark, innocence/fallenness, purity/contamination, and so forth.) Metaphysics consists of first establishing the binary, but from the moment it is established, it is already clear which of the two terms will be considered the good term and which the pejorative term—the good term is the one that is conceptually analogous to presence in either its spatial or temporal sense. The philosopher’s task, then, is to isolate the presence as the primary term, and to conceptually purge it of its correlative absent term.

A few examples will help elucidate this point: for Parmenides, divine wisdom entails an attempt to think that which is, at every present moment, the same. Heraclitean flux is the way of the masses. This in part shapes Plato’s emphasis on the eternality of the Form—while the material world changes with the passage of each new present, the Form remains the same, (ever-present or eternal); but in addition, the Form is also that which is closest to (a term of presence) the nature of the soul. In Plato the body, (given that it is in constant flux), is the prison of the soul, and in the Phaedo, life is declared a disease, for which death is the cure. In Aristotle’s Nicomachean Ethics, the most complete happiness for the human being lies in the life of contemplation, because the capacity for contemplation is that which makes us most like the gods, (who are ever the same). So we would be happiest if we could contemplate all the time; however, we unfortunately cannot (as we also have bodies and so, various familial, social, and political responsibilities). In Christianity, the flesh is subject to change (which is the very essence of corruptibility) while the Spirit is incorruptible (or ever-present); for Descartes, (and for the early phenomenological tradition), only what is spatially present (that is, immediate to consciousness), is indubitable. Whether or not the object of my present perception exists in the so-called real world, it is nevertheless indubitable that I am experiencing what I am experiencing, in the moment at which I am experiencing it—Descartes even goes so far as to define clarity (one of his conditions for ‘truth’) as that which is present. And for Descartes, the body (insofar as it is at least possible to doubt its existence), is not most essentially me; rather, my soul is what I am. We saw Aristotle’s emphasis on the now, or the present, as the yardstick against which time is measured. 
The spatial and temporal senses of the emphasis on presence are completely solidified in the phenomenology of Edmund Husserl, whose phenomenological reduction attempts to focus its attention exclusively on the phenomena of consciousness, because only then can it accord with the philosophical demand for apodicticity; and he understands this experience as constituted on the model of the living present. In each of these cases, a presence is valorized, and its correlative absence is suppressed.

As was the case with Deleuze, however, Derrida will argue that the self-proclaimed task of metaphysics ultimately fails. Thus, (and against some of his more dismissive critics), Derrida operates in the name of truth—when the history of metaphysics posits that presence is primary, and absence secondary, this claim, Derrida shows, is false. Metaphysics, in all of its manifestations, attempts to cast out the impure, but somehow, the impure always returns, in the form of a supplementary term; the secondary term is always ultimately required in order to supplement the primary term. Derrida’s project then is dedicated to demonstrating that if the subordinate term is required in order to supplement the so-called primary term, it can only be because the primary term suffers always and originally from a lack or a deficiency of some sort. In other words, the absence that philosophy sought to cast out was present and infecting the present term from the origin.

3. Key Differential Ontologists

a. Jacques Derrida as a Differential Ontologist

It is in the phenomenology of Edmund Husserl, as we said above, that Derrida discovers the constitutive play known as différance. In its effort to isolate ideal essences, constituted within the sphere of consciousness, phenomenology brackets or suspends all questions having to do with the real existence of the external world, the belief in which Husserl refers to as the natural attitude. The natural attitude is the non-thematic subjective attitude that takes for granted the real existence of the real world, absent and apart from my (or any) experience of it. Science or philosophy, in the mode of the natural attitude, ontologically distinguishes being from our perceptions of being, from which point it becomes impossible to ever again bridge the gap between the two. Try as it might, philosophy in the natural attitude can only ever have representations of being, and certainty (the telos of philosophical activity) becomes untenable. With this in mind, we get Husserl’s famous principle of all principles: “that everything originarily… offered to us in ‘intuition’ is to be accepted simply as what it is presented as being, but also only within the limits in which it is presented there.” (Ideas I, §24) Husserl thus proposes a change in attitude, in what he calls the phenomenological epochē, which suspends all questions regarding the external existence of the objects of consciousness, along with the constituting priority of the empirical self. Both self and world are bracketed, revealing the correlative structure of the world itself in relation to consciousness thereof, opening a sphere of pure immanence, or, in Derrida’s terminology, pure presence. It is for this reason that Husserl represents for Derrida the most strident defense of the metaphysics of presence, and it is for this reason that his philosophy also serves as the ground out of which the notion of constitutive difference is discovered.

In his landmark 1967 text, Voice and Phenomenon, Derrida takes on Husserl’s notion of the sign. The sign, we should note, is typically understood as a stand-in for something that is currently absent. Linguistically a sign is a means by which a speaker conveys to a listener the meaning that currently resides within the inner experience of the speaker. The contents of one person’s experience cannot be transferred or related to the experience of a listener except through the usage of signs, (which we call ‘language’). Knowing this, and knowing that Husserl’s philosophy is an attempt to isolate a pure moment of presence, it is little wonder that he wants, inasmuch as it is possible, to do away with the usage of signs, and isolate an interior moment of presence. It is precisely this aim that Derrida takes apart.

In the opening chapter of Husserl’s First Logical Investigation, titled “Essential Distinctions,” Husserl draws a distinction between different types or functions of signs: expressions and indications. He claims signs are always pointing to something, but what they point to can assume different forms. An indication signifies without pointing to a sense or a meaning. The flu or bodily infection, for instance, is not the meaning of the fever, but it is brought to our attention by way of the fever; the fever, that is, points to an ailment in the body. An expression, however, is a sign that is, itself, charged with conceptual meaning; it is a linguistic sign. There are countless types of signs (animal tracks in the snow point to the recent presence of life, a certain aroma in the house may indicate that a certain food, or even a certain cuisine, is being prepared), but expressions are signs that are themselves meaningful.

This distinction (indication/expression) is itself problematic, however, and does not seem to be fundamentally sustainable. An indication may very well be an expression, and expressions are almost always indications. If one’s significant other leaves one a note on the table, for instance, before one has even read the words on the paper, the sheer presence of writing, left in a certain way, on a sheet of paper, situated a certain way on the table, indicates: (1) that the beloved is no longer in the house; and (2) that the beloved has left one a message to be read. These signs are both indications and expressions. Furthermore, every time we use an expression of some sort, we are indicating something, namely, we are pointing toward an ideal meaning, empirical states of affairs, and so forth. In effect, one would be hard pressed to find a single example of a use of an expression that was not, in some sense, indicative.

Husserl, however, will argue that even if, in fact, expressions are always caught up in an indicative function, this has nothing to do with the essential distinction, obtaining de jure (if not de facto), between indications and expressions. Husserl cannot relinquish this conviction because, after all, he is attempting to isolate a pure moment of self-presence of meaning. So if expressions are signs charged with meaning, then Husserl will be compelled to locate a pure form of expression, which will require the absolute separation of the expression from its indicative function. Indeed he thinks that this is possible. The reason expressions are almost always indicative is that we use them to communicate with others, and in the going-forth of the sign into the world, some measure of the meaning is always lost: no matter how many signs we use to articulate our experience, the experience can never be perfectly and wholly recreated within the mind of the listener. So to isolate the expressive essence of the expression, we must suspend the going-forth of the sign into the world. This is accomplished in the soliloquy of the inner life of consciousness.

In one’s interior monologue, there is nothing empirical (nothing from the world), and hence, nothing indicative. The signs themselves have only ideal, not real, existence. Likewise, the signs employed in the interior monologue are not indicative in the way that signs in everyday communication are. Communicative expressions point us to states of affairs or the internal experiences of another person; in short, they point us to empirical events. Expressions of the interior monologue, however, do not point us to empirical realities; rather, Husserl claims, in the interior expression the sign points away from itself, and toward its ideal sense. For Husserl, therefore, the purest, most meaningful mode of expression is one in which nothing is, strictly speaking, expressed to anyone.

One might nevertheless wonder: is it not the case that when one ‘converses’ internally with oneself, one is, in some sense, articulating meaning to oneself? Here is a mundane example, one which has happened to each of us at some point in time: we walk into a room, and forget why we have entered the room. “Why did I come in here?” we might silently utter to ourselves, and after a moment, we might say to ourselves, “Ah, yes, I came in here to turn down the thermostat,” or something of the like. Is it not the case that the individual is communicating something to herself in this monologue?

Husserl responds in the negative. The signs we are using are not making known to the self a content that was previously inaccessible to the self (which is what takes place in communication). Because the sign points away from itself and directly toward the sense, the sense is not conveyed from a self to a self; rather, the sense of the expression is experienced by the subject at exactly that same moment in time.

This is where Derrida brings into the discussion Husserl’s notion of the living present, for this emphasis on the exact same moment in time relies upon Husserl’s notion of the primal impression. Derrida writes, “The sharp point of the instant, the identity of lived-experience present to itself in the same instant bears therefore the whole weight of this demonstration.” (Voice and Phenomenon, 51). In our discussion above of Husserl’s living present, we saw that the primal impression is constantly displaced by a new primal impression—it is in constant motion. Nevertheless, this does not keep Husserl from referring to the primal impression in terms of a point; it is, he says, the source-point on the basis of which retention is established. While the primal impression is always, as we saw with Husserl, surrounded by a halo of retention and protention, nevertheless this halo is always thought from the absolute center of the primal impression, as the source-point of experience. It is the “non-displaceable center, an eye or living nucleus” of temporality. (Voice and Phenomenon, 53)

This, we note, is the Husserlian manifestation of the emphasis on the temporal sense of presence in the philosophical tradition. Here, we should also note: Derrida never attempts to argue that philosophy should move away from the emphasis on presence. The philosophical tradition is defined, Derrida claims, by its emphasis on the present; the present provides the very foundation of certainty throughout the history of philosophy; it is certainty, in a sense. The present comprises an ineliminable essential element of the whole endeavor of philosophy. So to call it into question is not to try to bring a radical transformation to philosophy, but to shift one’s vantage point to one that inhabits the space between philosophy and non-philosophy. Indeed, Derrida motions in this direction, which is one of the reasons that he is more comfortable than many traditional academic philosophers writing in the style of, and in communication with, literary figures. The emphasis on presence within philosophy, strictly speaking, is incontestable.

Husserl, we saw, formulated his notion of the living present on the basis of his insistence on a qualitative distinction between retention, as a mode of memory still connected to the present moment of consciousness, and representational memory, which deliberately calls to mind a moment of the past that has, since its occurrence, left consciousness. This means, for Husserl, that retention must be understood in the mode of Presentation, as opposed to Representation. Retention is a direct intuition of what has just passed, directly presented, and fully seen, in the moment of the now, as opposed to represented memory, which is not. Retention is not, strictly speaking, the present; the primal impression is the present. Nevertheless, retention is still attached to the moment of the now. Furthermore, there is a sense in which retention is necessary to give us the experience of the present as such. The primal impression, where the point of contact occurs, is in a constant mode of passing away, and the impression only becomes recognizable in the mode of retention. As we said, to truly experience a song as a song entails that one must keep in one’s memory the preceding few notes; but this is just another way of saying that the present is constituted in part on the basis of memory, even if memory of what has just passed.

Derrida diagnoses, then, a tension operating at the heart of Husserl’s thinking. On the one hand, Husserl’s phenomenology requires the sharp point of the instant in order to have a pure moment of self-presence, wherein meaning, without the intermediary of signs, can be found. In this sense, retention, though primary memory, is memory nonetheless, and does not give us the present, but is rather, Husserl claims, the “antithesis of perception.” (On the Phenomenology of the Consciousness of Internal Time, 41) Nevertheless, the present as such is only ever constituted on the basis of its becoming concretized and solidified in the acts that constitute the stretching out of time, in retention. Moreover, given that retention is still essentially connected to the primal impression (which is itself always in the mode of passing away), there is not a radical distinction between retention and primal impression; rather, they are continuous, and the primal impression is really only, Husserl claims, an ideal limit. There is thus a continual accommodation of Husserlian presence to non-presence, which entails the admission of a form of otherness into the self-present now-point of experience. This accommodation is what keeps memory as retention fundamentally distinct from memory as representation.

Nevertheless, the common root, making possible both retention and representational memory, is the structural possibility of repetition itself, which Derrida calls the trace. The trace is the mark of otherness, or the necessary relation of interiority to exteriority. Husserl’s living present is marked by the structure of the trace, Derrida argues, because the primal impression, for Husserl, never occurs without a structural retention attached to it. Thus, in the very moment, the selfsame point of time, when the primal impression is impressed within experience, what is essentially necessary to the structure (in other words, not an accidental feature thereof) is the repeatability of the primal impression within retention. To be experienced in a primal impression therefore requires that the object of experience be repeatable, such that it can mark itself within the mode of retention, and ultimately, representational memory. Thus the primal impression is traced with exteriority, or non-presence, before it is ever empirically stamped.

On the basis of this trace that constitutes the presence of the primal impression, Derrida introduces the concept of différance:

[T]he trace in the most universal sense, is a possibility that must not only inhabit the pure actuality of the now, but must also constitute it by means of the very movement of the différance that the possibility inserts into the pure actuality of the now. Such a trace is, if we are able to hold onto this language without contradicting it and erasing it immediately, more ‘originary’ than the phenomenological originarity itself… In all of these directions, the presence of the present is thought beginning from the fold of the return, beginning from the movement of repetition and not the reverse. Does not the fact that this fold in presence or in self-presence is irreducible, that this trace or this différance is always older than presence and obtains for it its openness, forbid us from speaking of a simple self-identity ‘im selben Augenblick’? (Voice and Phenomenon, 58)

Différance, Derrida says, is a movement, a differentiating movement, on the basis of which presence (the ground of philosophical certainty) is opened up. The self-presence of subjectivity, in which certainty is established, is inseparable from an experience of time, and the structural essentialities of the experience of time are marked by the trace. The trace is more originary than the primordiality of phenomenological experience, because it is what makes that experience possible in the first place. From this it follows, Derrida claims, that Husserl’s project of locating a moment of pure presence will be necessarily thwarted, because in attempting to rigorously think it through, we have found hiding behind it this strange structure, signifying a movement and hence not a this, that Derrida calls différance.

Using Derrida’s terminology (which we shall presently dissect), we can say that différance is the non-originary, constituting-disruption of presence. Let us take this bit by bit. Différance is constituting: this signifies, as we saw in Husserl, that it is on the basis of this movement that presence is constituted. Derrida claims that it is the play of différance that makes possible all modes of presence, including the binary categories and concepts in accordance with which philosophy, since Plato, has conducted itself. That it is constitutive does not, however, mean that it is originary, at least not without qualification. To speak of origins, for Derrida, implies an engagement with a presumed moment of innocence or purity, in other words, a moment of presence, from which our efforts at meaning have somehow fallen away. Derrida says, rather, that différance is a “non-origin which is originary.” (“Freud and the Scene of Writing,” Writing and Difference, 203) Différance is, in a sense, an origin, but one that is already, at the origin, contaminated; hence it is a non-originary origin.

At the same time, because différance as play and movement always underlies the constitutive functioning of philosophical concepts, it likewise always attenuates this functioning, even as it constitutes it. Différance prevents the philosophical concepts from ever carrying out fully the operations that their author intends them to carry out. This constituting-disruption is the source of one of Derrida’s more (in)famous descriptions of the deconstructive project: “But this condition of possibility turns into a condition of impossibility.” (Of Grammatology, 74)

Différance attenuates both senses of presence to which we referred above: (1) Spatially, it differs, creating spaces, ruptures, chasms, and differences, rather than the desired telos of absolute proximity; (2) Temporally, it defers, delaying presence from ever being fully attained. Thus it is that Derrida, capitalizing on the “two motifs of the Latin differre…,” will understand différance in the dual sense of differing and deferring (“Différance,” in Margins of Philosophy, 8).